Note that winners were not selected in all subcategories or in each place within each category.

Category 1: Survey - Probability

No prizes awarded

Category 1: Survey - Nonprobability

| Prize | Amount | Team Name | Team Members | Description |

|---|---|---|---|---|

| First Prize | $25,000 | MCHawks | Andrew Wheeler and Giovanni Circo | This entry proposes the use of Every Door Direct Mail address list as a sampling frame, along with an innovative use of multi-level regression with post-stratification (MRP). Postcards are mailed to addresses. The postcards have a URL and/or a QR code to allow access to a web-based survey. Entrants propose that MRP be used in conjunction with data from the American Community Survey to reweight survey estimates of average attitudes in microgeographies. This approach allows measurement of microlevel variation in perceptions. |

| Second Prize | $10,000 | Micro-Community Policing Plans | Jacqueline B. Helfgott; Seattle Police Department/Brian Maxey; Denver Police Department/Commander Jacob Herrera; Hello Lamp Post/Isabel Loos | This entry proposes to build on the Micro-Community Policing Plans neighborhood-level, nonprobability survey methodology. A key innovation is the use of Hello Lamp Post to invite members of the public to engage in text-based mobile phone chats, with the intention of reaching potential respondents who might not respond to traditionally fielded (e.g., mail or web-based) surveys. Hello Lamp Post employs signs with QR codes, placed in various public spaces, to engage place users in chats via their mobile phones. This submission presents a novel method of exposing potential respondents to the existence of a survey with the goal of increasing participation. |

| Third Prize | $5,000 | Johnston & Company | Britnee Johnston | This entry proposes using a geographically enabled web-based survey platform, ArcGIS Survey 123, in combination with existing email and physical address lists collected and maintained by city departments as a sampling frame. This submission represents a creative use of existing email and physical address lists to enable geographic specificity and reduce the cost of data collection. |

| Fourth Prize | $2,500 | Just Police | Shichun McCammond, Sara-Laure Faraji, and Derek Michael McCammond | This entry proposes to partner with each state’s Department of Motor Vehicles (DMV) to collect survey data when residents use the site for routine tasks such as renewing vehicle registrations. Surveys would be deployed via a chat and participation would be incentivized by a reduction in the DMV vehicle registration fee. Judges appreciated the creativity of proposing a partnership with a commonly used government agency to engage potential respondents as they go about a legally required activity and enable the development of estimates of perceptions for microgeographic areas across the entirety of each state. |

| Fifth Prize | $2,500 | Policing Accountability and Policy Evaluation Research Lab | Joshua McCrain, Kaylyn Jackson Schiff, Ian Adams, and Daniel S. Schiff | This entry proposes to use multi-level regression with post-stratification (MRP) and validates its use with a series of simulations. MRP is a robust methodology for generating small area estimations with relatively small sample sizes -- even in geographies without any survey responses. |
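
The reweighting idea behind the MRP-based entries above can be illustrated with plain post-stratification. The sketch below is not the entrants' method: it omits the multilevel regression that produces the per-cell estimates in MRP and simply weights hypothetical per-cell survey means by hypothetical population counts (standing in for American Community Survey totals) for a single microgeography. The function name, cell labels, and all numbers are illustrative assumptions.

```python
def poststratify(cell_means, cell_population):
    """Population-weighted average of per-cell estimates.

    cell_means: dict mapping a demographic cell to its estimated mean response.
    cell_population: dict mapping the same cells to population counts.
    """
    missing = set(cell_population) - set(cell_means)
    if missing:
        raise ValueError(f"no estimate for cells: {sorted(missing)}")
    total = sum(cell_population.values())
    if total <= 0:
        raise ValueError("population counts must sum to a positive number")
    # Weight each cell's estimate by its share of the population.
    return sum(cell_means[c] * n for c, n in cell_population.items()) / total


# Hypothetical estimates on a 1-5 agreement scale for one block group.
cell_means = {"18-34": 2.0, "35-64": 3.0, "65+": 4.0}
# Hypothetical population counts for the same block group.
cell_population = {"18-34": 500, "35-64": 300, "65+": 200}

estimate = poststratify(cell_means, cell_population)
print(round(estimate, 2))
```

In a full MRP workflow, the per-cell means would come from a fitted multilevel model rather than raw survey averages, which is what allows estimates for cells or geographies with few or no respondents.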

Category 2: Data - Overall

| Prize | Amount | Team Name | Team Members | Description |

|---|---|---|---|---|

| First Prize | $25,000 | Community PoliSense | Annie Chen, Cecilia Low-Weiner, Osama Qureshi, and Michael A. Keith, Jr. | This entry proposes to use data from social media (X, formerly Twitter), administrative sources (voter registration), and Census to uncover community perceptions at microgeographies. Twitter names and self-reported locations would be matched with voter registration street address data to obtain individual-level demographics. This sample of geolocated Twitter users can then be enriched with Census block group information. Tweets and shared articles are processed with text classifiers to add tags, which would be used to train another natural language processing (NLP) model to identify the sentiment of tweets and calculate user-level sentiment scores measuring perceptions for each of the five constructs. This replicable methodology represents an innovative approach to identifying and measuring community perceptions of public safety-related constructs at microgeographies. |

| Second Prize | $10,000 | Albrecht & Smirnova | Kat Albrecht and Inna Smirnova | This entry proposes to use a stratified sample of city-based comments from text-based discussion platforms (Reddit) and Natural Language Processing (NLP) as a proof of concept. The proposed approach would process city-level subreddits to generate measures of the five constructs. The innovative aspect of this submission is in the use of city-level subreddits and computational methods using several types of NLP analyses to reveal community perceptions of constructs. |

| Third Prize | $5,000 | ScienceCast | John Beverly and Andrew Jiranek | This entry proposes to leverage information in the Police Score Card Database (PSCD), Wikidata, and Twitter to measure community perception constructs. The PSCD is a nationwide database of policing indicators gathered from state and federal databases as well as media reports and public records requests. A framework describing relationships within these sources would be developed and used to inform the creation of a knowledge graph. The proposed method employs a variety of machine learning tools to process the data and sentiment analysis to quantify community perceptions of the constructs, highlighting novel strategies and tools for analyzing existing data regarding community perceptions. |

| Fourth Prize | $2,500 | Michelle Masters | Michelle Masters | This entry proposes a qualitative method called Photovoice that uses a combination of photos and text to gather data. Photovoice is often deployed in conjunction with Community Based Participatory Research (CBPR) when used at the community level. The five constructs would be used as a series of prompts. Participants would upload a photograph and descriptive text in response to each of the prompts. Microgeographies of photos could be identified by the submitter. Qualitative analysis software would be used to identify themes in the text. This entry proposes a novel design for soliciting community perceptions. |

Category 2: Data - Individual - Respect

No prizes awarded

Category 2: Data - Individual - Fear of Crime

Note that two first prizes, and no other prizes, were awarded in this subcategory.

| Prize | Amount | Team Name | Team Members | Description |

|---|---|---|---|---|

| First Prize | $5,000 | The Eagles | Ruilin Chen | This entry proposes to use data from Airbnb property listings and customer reviews, particularly those classified under the "location" subcategory, to assess and quantify levels of fear of crime within communities. Entrants propose the application of computational methods, with a primary reliance on Large Language Models (LLMs), to extract neighborhood-specific information from the Airbnb dataset. The proposed text analysis process includes text classification, sentiment analysis, and topic modeling. Both supervised and unsupervised LLM-based text mining techniques would be used. This proposal illustrates how an existing data set can be used to measure community fear of crime. |

| First Prize | $5,000 | Duddon Research | Shana M. Judge | This entry proposes measuring fear of crime by using a proxy measure comprising certain neighborhood and housing characteristics that local governments could compile from administrative records and publicly available sources. To test the proposed approach, the entry operationalizes the fear of crime construct by using residents’ perceptions of crime in their community, as reported in responses to questions from the U.S. Census Bureau’s biennial American Housing Survey. The entry then uses penalized regression models to determine which housing-related characteristics best predict whether residents agree that their neighborhoods have high levels of serious crime. Results using all available survey years indicate that nine distinct neighborhood features can be used to accurately predict residents’ perceptions of crime. Because local governments can readily measure these characteristics, the proxy measure could be used at frequent intervals and at different levels of geography. The entry illustrates how an existing national dataset may be used in a novel way to measure community-level fear of crime. |

Category 2: Data - Individual - Police Accountability

No prizes awarded

Category 2: Data - Individual - Community Policing

| Prize | Amount | Team Name | Team Members | Description |

|---|---|---|---|---|

| First Prize | $5,000 | Mood mappers | Shafaq Chaudhry, Murat Yuksel, and Nelson Roque | This entry proposes to adopt a citizen science methodology to measure community emotions using an application named "Mood Diary." Individuals would be asked to install the app on their smartphones. "Mood Diary" is specially designed to gather information about mood and stress levels using smartphone sensors, including accelerometers, GPS, and phone usage patterns, along with self-reported surveys. The proposal outlines methods to analyze stress data in real-time and introduces a "Mood Map" dashboard to track mood fluctuations within a city. This proposal offers the potential to enable examination of how changes in community policing strategies affect community mood. |

- I. Statement of the Problem

- II. Motivation for the Challenge

- III. Challenge Entries

- IV. Judging Criteria

- V. Other Rules and Conditions

- VI. Prize Disbursement and Challenge Winners

- VII. Contact Information

- VIII. References

I. Statement of the Problem

Consistent, rigorous measurement of community perceptions provides police, city managers, advocates, and community members with a quantitative assessment of performance (Rosenbaum, Lawrence, Hartnett, McDevitt, & Posick, 2015) and is an essential ingredient in building community trust in law enforcement (La Vigne, Dwivedi, Okeke, & Erondu, 2014). Accurate measurement of community views can inform the development of new and more effective strategies for improving both police-community relations and public safety, as well as provide important feedback related to changes in policy and practice. Traditionally, law enforcement agencies have primarily relied upon largely subjective, qualitative input from community meetings or convenience sample-based citizen satisfaction surveys to obtain community feedback. However, people who live in places where crime and police presence are most intensive are less likely to participate in community meetings and surveys than those who reside in lower crime areas. As a result, general community surveys typically “over represent the views of affluent, educated, white people and under represent the experiences of people of color, particularly those residing in impoverished communities” (La Vigne et al., 2014, p. 1). Additionally, community surveys are often expensive, time-consuming, and unable to provide estimates at smaller geographies within municipalities. At the same time, there have been significant advances in data availability and use to model human behavior and quantify preferences and attitudes. However, these advances have yet to be widely applied within the criminal justice realm.

To address these issues, the National Institute of Justice's (NIJ) Measures of Community Perceptions Challenge (the Challenge) invites innovative methods for measuring community attitudes, perceptions, and/or beliefs about public safety. The methods submitted should have the following characteristics:

- Representative – entries accurately represent the characteristics of the community on the key dimensions of race, ethnicity, age, and gender.

- Cost effective – so that they can be deployed frequently to better understand patterns in changes in community perceptions.

- Accurate across microgeographies – produce accurate estimates across microgeographies (i.e., the smallest unit that does not reveal the identities of any individuals) to reveal differences in patterns of perceptions.

- Frequent – capable of low-burden administration on a systematic basis.

- Scalable – able to be successfully deployed in jurisdictions of varying sizes.

II. Motivation for the Challenge

Myriad approaches for measuring community perceptions exist. Surveys are the most frequently used method, but societal and especially technological changes over the last 20 years have radically altered survey sampling, administration, and response rates (Couper, 2017). The transition from landline telephones to mobile telephones meant that telephone numbers could no longer be tied to specific geographic locations. The implication for surveys was that random digit dialing (RDD) became ineffective at linking respondents to geographies. Additionally, caller identification (caller ID) and spam filter apps allow mobile telephone users to screen calls, which has caused response rates to plummet. These developments sparked a shift from RDD to address-based lists. As a result, survey modes also shifted from telephone surveys to self-administered surveys (mail or internet). But the low response rates across these modes, coupled with the relatively low cost of internet surveys, have renewed interest in the use of nonprobability sampling and reopened the debate over probability versus nonprobability sampling methods (Couper, 2017). Importantly, these changes have been experienced by surveys in general, not only those about crime and criminal justice.

Probability surveys have long been considered the gold standard in survey methodology. For general population surveys, a random sample is drawn from a list of the target population. This list typically consists of addresses (address-based sampling) or telephone numbers (random digit dialing). As the number of surveys of all kinds has increased and spread across more dimensions of everyday life, it has become increasingly difficult to get sampled individuals or households to participate. Response rates have fallen dramatically even for robust, well-run, and widely recognized surveys.[1] As a result, probability surveys are more expensive and time-consuming and, to the extent that nonrespondents are systematically different from respondents on variables of interest, more vulnerable to nonresponse error (Lynn, 2008), which is especially prevalent in population surveys (Groves & Peytcheva, 2008).

Because of the above issues associated with probability sampling, the use of nonprobability surveys, especially over the internet, has proliferated. Common nonprobability methods include convenience/purposive sampling, quota sampling, on-line panels, and snowball sampling. Nonprobability surveys can be administered via the internet, in person, over the telephone, or using text-based surveys via mobile phones (Couper, 2017).

At the same time, new methods have emerged that leverage the increased accessibility of big data sets to gauge community perceptions (e.g., social media monitoring, automated text analysis, and data brokerage). Tools such as natural language processing and machine learning continue to develop and offer new possibilities for synthesizing data describing community member experience with and trust in the police. Indeed, these tools are being employed to measure opinions and sentiments in other contexts.[2] Importantly, the mining of big data has the potential to provide near-real-time information regarding attitudes toward and experiences with the police that may arise from police reforms and community engagement initiatives. However, these big data methods have notable challenges. Social media data may not be representative of the entire community and may be biased towards certain groups, leading to inaccurate or skewed perceptions of community attitudes. Data brokering has substantial civil liberties and privacy concerns, particularly around the collection and use of personal data. And automated text analysis is prone to misinterpretation and lack of context. The very use of big data methods by law enforcement agencies may erode trust between law enforcement and the community if people are not aware of how their data are being used or if people believe their privacy is being violated. Concerns over the misuse of online data may have a chilling effect on free speech and expression, particularly if people believe their social media posts are being monitored and analyzed by law enforcement agencies.

As an interdisciplinary research arm of the U.S. Department of Justice, NIJ invests in scientific research across disciplines to serve the needs of the criminal justice community. The Challenge format is particularly well-suited to encouraging the development and testing of innovative ideas that leverage solutions from a wide range of scientific fields to help solve difficult research and technological problems, such as accurately measuring community perceptions. To that end, this Challenge invites participants to submit detailed methods for measuring community sentiments that are representative, cost effective, accurate across microgeographies, and capable of being administered frequently.

III. Challenge Entries

All Challenge entries must demonstrate appropriate knowledge of applicable datasets and methods. Entries from disciplines outside of criminal justice or involving interdisciplinary teams are encouraged. NIJ welcomes entries from practitioners, researchers, public and private entities, research laboratories, startup companies, students, and others.

III.a. Requirements

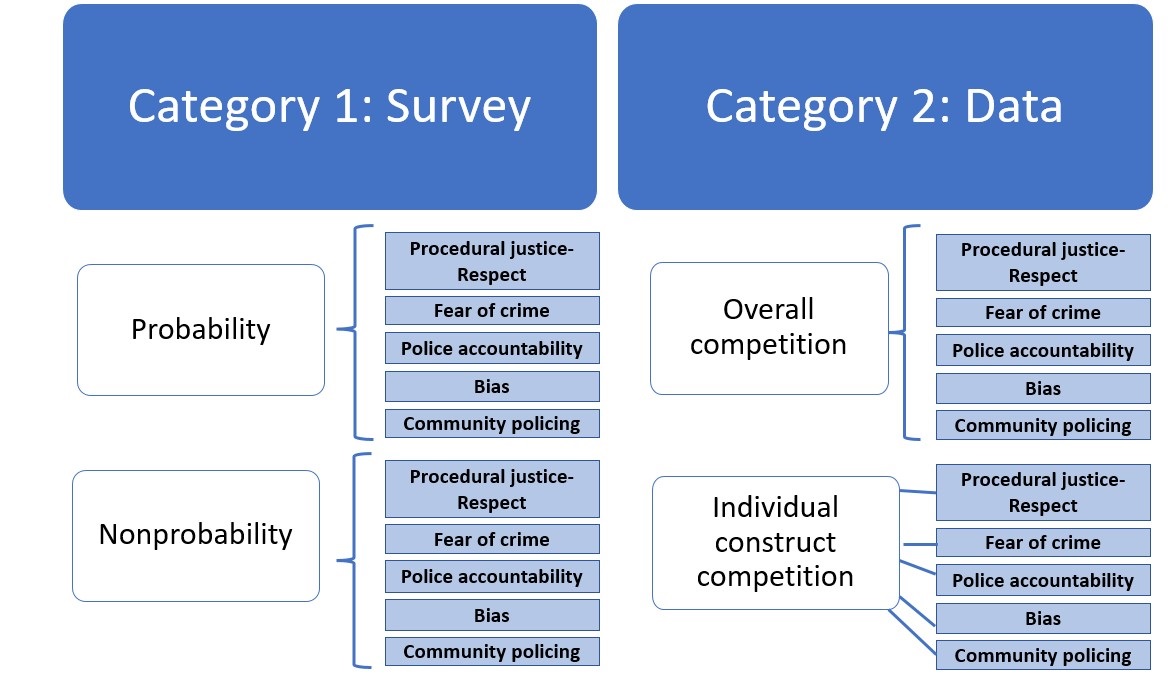

This is an open competition to develop new methods for capturing community perceptions of police and public safety. To facilitate comparing entries using similar methods, entries will be accepted under two categories; each category measures the same five constructs (see Figure 1). Category 1 is for approaches that use a survey instrument, regardless of whether the sampling design is probability, nonprobability, or a combination of the two. Solutions that rely solely upon law enforcement contact surveys will not be considered. Category 2 is for approaches that use non-survey instrument methods to measure constructs. Examples include approaches that gather information without direct interaction with community members, using social media data (e.g., through sentiment analysis, natural language processing, and automatic summarization), administrative data, other proxy data (perhaps combined with mobile telephone data), or some combination of these, to produce accurate, cost-effective measures of community perceptions.

III.b. Constructs and Survey Questions for Category 1 Entries

All Category 1 entries will measure the following five constructs using a survey with the questions provided below in Table 1. The intent of the competition is to focus upon the proposed method rather than the development of the survey questions. All contestants should use the same questions provided in Table 1. Contestants must provide a detailed description of their approach.

| Construct | Definition | Survey question |
|---|---|---|
| Procedural Justice - Respect | Perception that police are respectful when interacting with members of the public | Police in my neighborhood treat people with dignity and respect (response options: strongly disagree, disagree, neither agree nor disagree, agree, strongly agree) |
| Fear of crime | Perception of personal safety | Is there any area near where you live -- that is, within a mile -- where you would be afraid to walk alone at night? (response options: Yes, No) |
| Police accountability | Perception that the police department holds officers accountable for misconduct | The police department holds officers accountable for wrong or inappropriate conduct in the community (response options: strongly disagree, disagree, neither agree nor disagree, agree, strongly agree) |
| Bias | Perception that police are biased | The police act based on personal prejudices and biases (response options: strongly disagree, disagree, neither agree nor disagree, agree, strongly agree) |
| Community policing | Perception that the police department and community members have shared public safety interests | The police department is responsive to community concerns (response options: strongly disagree, disagree, neither agree nor disagree, agree, strongly agree) |

III.c. Constructs for Category 2 Entries

Contestants in Category 2 may submit to either the overall competition or the individual construct competition. If applicants do compete in more than one subcategory, the entries need to be unique. Uniqueness of entries will be determined by the judges. Contestants in the individual construct competition may provide entries to more than one construct but are limited to one entry for each construct. Entries in Category 2 must identify the proxy measure and the existing data source(s) they would leverage for each of the constructs and all pertinent metadata (e.g., who owns the data, how regularly is it updated, representativeness of the data, etc.). Entries must discuss the limitations, accuracy, biases, and potential harms in the proposed proxy measures across the community, particularly among marginalized groups. Entries should identify steps to mitigate any limitations and biases, such as using multiple data sources, validating the data and proxy measures through multiple methods, or discussing how the data might be used in conjunction with survey information.

| Construct | Definition |
|---|---|
| Procedural Justice - Respect | Perception that police are respectful when interacting with members of the public |
| Fear of crime | Perception of personal safety |
| Police accountability | Perception that the police department holds officers accountable for misconduct |
| Bias | Perception that police are biased |
| Community policing | Perception that the police department and community members have shared public safety interests |

III.d. How to Enter

Contestants must submit entries via the “Innovations in Measuring Community Perceptions” announcement during the Challenge submission period. Entries must be made through the challenge submission form.

The Challenge submission period is from 12:00 a.m. (ET) May 16, 2023, through 11:59 p.m. (ET) July 31, 2023. Registration and entry are free. Individual and team entries are acceptable.

The Challenge will use a two-category submission process. All Category 1 entries will measure the same constructs of community perceptions (see section III.b.) and should be submitted under the probability survey competition or nonprobability survey competition sub-categories. Category 1 entries proposing a combination of probability and nonprobability survey methods should be submitted under the nonprobability sub-category. All Category 2 entries (see section III.c.) should be submitted under either a) the overall competition or b) the individual construct competition. Individual construct entries should clearly identify which construct is being measured as well as the proposed measure.

All entries are final. Once an entry is submitted, no changes may be made.

III.e. Submission Requirements

All entries shall include:

- A Team Roster (even if a single individual) listing each participant’s full legal name, email (only for prize notification), and percentage of prize money for that participant (not counted against the 12-page limit). The Team Roster must indicate the proportional amount of any prize to be paid to each member. Each team member must sign the Team Roster next to their name. For example: John Q. Public ________(Signature), ______ (X%). Team entries that do not submit a Team Roster signed by each member of the team will be disqualified.

- A narrative not exceeding 12 pages (12 pt. font; double spaced), submitted in any of the following formats: .txt, .doc, .docx, .rtf, .odt, .odf, or PDF. Any entry exceeding the page limit will be disqualified.

- An appendix that only contains the following information and will not be counted against the 12-page limit:

  - A bibliography.

  - A budget document that contains a clear, detailed, and well-justified accounting of the anticipated cost to implement the proposed survey or measure(s).

Additional sections, not listed in the submission requirements, will count towards the 12-page narrative limit.

If an applicant makes more than one submission in the same subcategory and with the same information as another submission, only the most recent submission will be accepted, and all prior submissions will be disqualified.

III.f. Important Dates and Times

Submission and selection dates:

- Submission period begins: May 16, 2023

- Submission period ends: July 31, 2023, 11:59 p.m. ET

- All Winners Announced: November 2023 (anticipated)

Submission requirements and dates may be updated during the Challenge.

III.g. Judges

Challenge submissions will be judged by a distinguished panel of reviewers with expertise in one or more of the following areas: criminal justice, data science, and survey methodology. Entries will be judged according to the criteria listed in sections IV.a. and IV.b. Reviewer ratings and recommendations are advisory.

IV. Judging Criteria

Entries should provide detailed descriptions of how the proposed approach meets each of the requirements. To the extent possible, the description of each criterion should include any evidence that exists to support claims about the proposed methodology. If no evidence exists, entries should provide a coherent, plausible, theoretically based argument in support of the approach used. Evidence that the proposed method has been used successfully in analogous scenarios will strengthen proposals. Regardless of category, each entry must provide the following:

- A detailed overview of the proposed method(s). Inclusion of proof-of-concept results and/or results from the analogous use of proposed methods is encouraged.

- A narrative that addresses to what extent the proposed method(s) satisfies each of the requirements (see sections IV.a. and IV.b.).

IV.a. Category 1 Judging Criteria

The survey methods should be described completely, including data sources, internal and external validity, ordering of steps, means of data collection, and sampling frame (if relevant).

The following criteria will be used to judge Challenge entries:

- Representativeness (20%) – Includes detailed information describing data and analytical techniques for determining the degree to which the data used in the methodology represent the target population by microgeography. Strategies for both reducing and quantifying error should be addressed (e.g., coverage error, nonresponse error, etc.) as appropriate to the proposed method. For example, representativeness can be estimated by comparing aggregate percentages from the survey to a benchmark from non-survey data, such as the decennial census on the aggregate demographics of respondents. Other datasets may be used in addition to census data. Submissions must also include specific process and outcome measures which can be used to accurately measure representativeness. Category 1 entries proposing a probability survey should use the Total Survey Error approach (Biemer, 2010; Biemer et al., 2017). Those proposing a nonprobability approach should draw from a causal inference framework (Cornesse et al., 2020; Mercer et al., 2017). Such an approach emphasizes the identification of likely confounders during the design phase so that they can be measured and possibly minimized (Mercer et al., 2017).

- Cost (20%) – Clear and specific description of all costs related to deploying the method including a total cost per data collection. For Category 1 entries, a publication sponsored by the Bureau of Justice Statistics offers clear examples of how to quantify survey costs (Edwards, Giambo, & Kena, 2020, pp. 16-17). All costs should be reported per capita assuming a study area of 100,000 population.

- Accuracy across microgeographies (20%) – Clear and specific description of how entrants plan to establish the accuracy of results across microgeographies (see Requirement A). The smallest geography for which the methods are accurate must be clearly defined (such as census block group, census block, or street segment).

- Capable of frequent administration (20%) – Clear explanation of methodology’s ability to support frequent data collection of at least once every six months. Estimates of the duration of time from beginning to end of data collection as well as descriptive data analysis should be included as separate items.

- Scalability (15%) – Clear rationale describing the likelihood that the proposed methodology could be successfully deployed in cities of varying sizes and demographic structure. Success can be measured by achieving the same accuracy in terms of cost per capita.

- Human subjects protection/privacy (5%) – All entries should address how to measure community perceptions while protecting the identities of community members.
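
The benchmark comparison mentioned under Representativeness can be sketched as follows. The example compares hypothetical respondent counts with hypothetical census counts for one microgeography and reports the per-group gap in shares along with one possible summary, the index of dissimilarity (half the sum of the absolute gaps). The group labels, the numbers, and the choice of summary statistic are assumptions for illustration; the Challenge does not prescribe a particular measure.

```python
def share(counts):
    """Convert raw counts to proportions that sum to 1."""
    total = sum(counts.values())
    if total <= 0:
        raise ValueError("counts must sum to a positive number")
    return {group: n / total for group, n in counts.items()}


def representativeness_gaps(sample_counts, benchmark_counts):
    """Return per-group (sample share - benchmark share) and the dissimilarity index."""
    groups = set(sample_counts) | set(benchmark_counts)
    # Groups absent from one source are treated as having a count of zero.
    sample = share({g: sample_counts.get(g, 0) for g in groups})
    bench = share({g: benchmark_counts.get(g, 0) for g in groups})
    gaps = {g: sample[g] - bench[g] for g in groups}
    dissimilarity = 0.5 * sum(abs(gap) for gap in gaps.values())
    return gaps, dissimilarity


# Hypothetical respondents by age group in one census block group.
sample_counts = {"18-34": 20, "35-64": 50, "65+": 30}
# Hypothetical census counts for the same block group.
benchmark_counts = {"18-34": 400, "35-64": 400, "65+": 200}

gaps, dissimilarity = representativeness_gaps(sample_counts, benchmark_counts)
for group in sorted(gaps):
    print(f"{group}: {gaps[group]:+.2f}")
print(f"dissimilarity index: {dissimilarity:.2f}")
```

A negative gap marks a group that is underrepresented among respondents relative to the benchmark; repeating the comparison for each microgeography shows where coverage or nonresponse problems are concentrated.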

IV.b. Category 2 Judging Criteria

The methods should be described completely including data sources, internal and external validity, specific steps involved and their order, and means of data collection. Entries to the overall competition should include a measure for each of the five constructs. Each individual construct entry should address a single construct. Category 2 entries must provide:

- Identification of the target population.

- A clear indication of the proxy measures to be used for each construct (see sections III.b. and III.c.). A complete justification of the construct operationalization for each measure in the proposed model(s).

- A discussion of data sources, data fusion, data imputation, and data analysis.

- A discussion of potential sources of bias in the method and the data, as well as strategies for reducing and quantifying bias.

The following criteria will be used to judge Challenge entries:

- Representativeness – How well does the target population represent the entire community? How well do the proxy measures represent the construct measures? Includes detailed information describing data and analytical techniques for determining the degree to which the data represent the target population and the degree to which the target population represents the entire community. (20%)

- Cost – Clear and specific description of all costs related to collecting and analyzing the data including a total cost per data collection. All costs should be reported per capita assuming a study area of 100,000. Estimates of all upfront implementation costs and sustaining costs should be provided and justified. (20%)

- Accuracy across microgeographies – Clear and specific description of how entrants plan to establish and validate the accuracy of results across microgeographies (see Requirement A). The smallest geography for which the methods are accurate must be clearly defined (such as census block group, census block, or street segment). A discussion of biases and the mitigation of those biases should be included. (20%)

- Sustainability – Clear explanation of methodology’s ability to support frequent data collection of at least once every six months. Estimates of the duration of time it will take to collect data, if not continuous, as well as descriptive data analysis, should be included as separate items. (20%)

- Scalability – Clear rationale describing the likelihood the proposed methodology could be successfully deployed in cities of varying sizes and demographic structure. Success can be measured by achieving the same accuracy in terms of cost per capita. (15%)

- Human subjects protection/privacy – All entries should address how to measure community perceptions while protecting the identities of community members and mitigating community surveillance and privacy concerns that could further erode community trust in law enforcement. Entries should discuss methods for anonymizing the data, if applicable. (5%)
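
The percentage weights attached to the criteria in sections IV.a. and IV.b. suggest a weighted composite score. The following is a minimal sketch, assuming each criterion is rated on a common 0-100 scale and that the weights combine linearly. The Challenge does not state the rating scale or how reviewer ratings are aggregated, so both are assumptions, as are the dictionary keys and the example ratings.

```python
# Weights as listed in sections IV.a. and IV.b. (they sum to 100%).
WEIGHTS = {
    "representativeness": 0.20,
    "cost": 0.20,
    "accuracy_across_microgeographies": 0.20,
    "frequency": 0.20,  # called "Sustainability" in Category 2
    "scalability": 0.15,
    "human_subjects_protection": 0.05,
}


def weighted_score(ratings, weights=WEIGHTS):
    """Combine per-criterion ratings (assumed 0-100) into one weighted score."""
    if set(ratings) != set(weights):
        raise ValueError("ratings must cover exactly the weighted criteria")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(weights[name] * ratings[name] for name in weights)


# Hypothetical ratings for a single entry.
ratings = {
    "representativeness": 80,
    "cost": 70,
    "accuracy_across_microgeographies": 90,
    "frequency": 60,
    "scalability": 50,
    "human_subjects_protection": 100,
}

print(round(weighted_score(ratings), 1))
```

Because four of the six criteria carry equal 20% weights, an entry cannot offset a weak showing on representativeness, cost, accuracy, or frequency with a strong privacy plan alone.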

IV.c. Prizes

A total of $175,000 is available across the two categories, $85,000 for each of categories 1 and 2, divided as shown in tables 3-4.

| Category | First Prize | Second Prize | Third Prize | Fourth and Fifth Prizes |

|---|---|---|---|---|

| Probability survey competition | $25,000 | $10,000 | $5,000 | $2,500 |

| Nonprobability survey competition | $25,000 | $10,000 | $5,000 | $2,500 |

| Category | First Prize | Second Prize | Third Prize | Fourth and Fifth Prizes |

|---|---|---|---|---|

| Addresses all five constructs | $25,000 | $10,000 | $5,000 | $2,500 |

| Category | First Prize | Second Prize | Third Prize | Fourth and Fifth Prizes |

|---|---|---|---|---|

| Construct 1 | $5,000 | $2,000 | $1,000 | $500 |

| Construct 2 | $5,000 | $2,000 | $1,000 | $500 |

| Construct 3 | $5,000 | $2,000 | $1,000 | $500 |

| Construct 4 | $5,000 | $2,000 | $1,000 | $500 |

| Construct 5 | $5,000 | $2,000 | $1,000 | $500 |
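The per-category figure can be checked with a short tally of the tables above. This sketch rests on two assumptions that the text does not state outright: that the "Fourth and Fifth Prizes" column is a single combined amount, and that the first table belongs to category 1 while the remaining two tables belong to category 2. The function name `category_totals` is hypothetical.

```python
# Prize amounts copied from the tables above, in dollars.
# Order: first, second, third, fourth-and-fifth (treated as one combined amount).
FULL_TIER = [25_000, 10_000, 5_000, 2_500]
CONSTRUCT_TIER = [5_000, 2_000, 1_000, 500]


def category_totals(num_constructs=5):
    """Sum the prize tables per category under the assumptions stated above."""
    # Category 1: probability and nonprobability survey competitions.
    category_1 = 2 * sum(FULL_TIER)
    # Category 2: one all-constructs competition plus one per single construct.
    category_2 = sum(FULL_TIER) + num_constructs * sum(CONSTRUCT_TIER)
    return {"category_1": category_1, "category_2": category_2}


print(category_totals())  # {'category_1': 85000, 'category_2': 85000}
```

Under that reading, each category sums to $85,000, which agrees with the per-category figure given in the text.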

All final award decisions will be made by the NIJ Director, who may take into account the judges’ review and NIJ staff recommendations. Prizes will be awarded at the end of the Challenge. Any contestant who wins multiple prizes will receive one prize payment for the total amount won. If the NIJ Director determines that no entry is deserving of an award, no prizes will be awarded.

Subject to the availability of appropriated funds and to any modifications or additional requirements that may be imposed by law, the contestants submitting a winning solution may be invited to compete for additional funding to pilot their solution.

V. Other Rules and Conditions

V.a. Submission Period

The Challenge Submission Period ends on July 31, 2023, 11:59 p.m. ET. Entries submitted after the designated Submission Period will be disqualified and will not be reviewed.

V.b. Eligibility

The Challenge is open to: (1) individual residents of the 50 United States, the District of Columbia, Puerto Rico, the U.S. Virgin Islands, Guam, and American Samoa[3] who are at least 13 years old at the time of entry; (2) teams of eligible individuals; and (3) corporations or other legal entities (e.g., partnerships or nonprofit organizations) that are domiciled in any jurisdiction specified in (1). Entries by contestants under the age of 18 must include the co-signature of the contestant’s parent or legal guardian. Contestants may submit or participate in the submission of only one entry. Employees of NIJ and individuals or entities listed on the Federal Excluded Parties list (available from SAM.gov) are not eligible to participate. Employees of the Federal Government should consult with the Ethics Officer at their place of employment prior to submitting an entry for this Challenge. The Challenge is subject to all applicable federal laws and regulations. Submission of an entry constitutes a contestant’s full and unconditional agreement to all applicable rules and conditions. Eligibility for the prize award(s) is contingent upon fulfilling all requirements set forth herein.

V.c. General Warranties and Conditions

- Release of Liability: By entering the Challenge, each contestant agrees to: (a) comply with and be bound by all applicable rules and conditions, and the decisions of NIJ, which are binding and final in all matters relating to this Challenge; (b) release and hold harmless NIJ and any other organizations responsible for sponsoring, fulfilling, administering, advertising, or promoting the Challenge and all of their respective past and present officers, directors, employees, agents, and representatives (collectively, the “Released Parties”) from and against any and all claims, expenses, and liability arising out of or relating to the contestant’s entry or participation in the Challenge, and/or the contestant’s acceptance, use, or misuse of the prize or recognition.

The Released Parties are not responsible for: (a) any incorrect or inaccurate information, whether caused by contestants, printing errors or by any of the equipment or programming associated with, or utilized in, the Challenge; (b) technical failures of any kind, including, but not limited to malfunctions, interruptions, or disconnections in phone lines or network hardware or software; (c) unauthorized human intervention in any part of the entry process or the Challenge; (d) technical or human error which may occur in the administration of the Challenge or the processing of entries; or (e) any injury or damage to persons or property which may be caused, directly or indirectly, in whole or in part, from contestant’s participation in the Challenge or receipt or use or misuse of any prize. If for any reason a contestant’s entry is confirmed to have been erroneously deleted, lost, or otherwise destroyed or corrupted, contestant’s sole remedy is to submit another entry in the Challenge so long as the resubmitted entry is prior to the deadline for submissions.

- Termination and Disqualification: NIJ reserves the authority to cancel, suspend, and/or modify the Challenge, or any part of it, if any fraud, technical failures, or any other factor beyond NIJ’s reasonable control impairs the integrity or proper functioning of the Challenge, as determined by NIJ in its sole discretion. NIJ reserves the authority to disqualify any contestant it believes to be tampering with the entry process or the operation of the Challenge or to be acting in violation of any applicable rule or condition. Any attempt by any person to undermine the legitimate operation of the Challenge may be a violation of criminal and civil law, and, should such an attempt be made, NIJ reserves the authority to seek damages from any such person to the fullest extent permitted by law. NIJ’s failure to enforce any term of any applicable rule or condition shall not constitute a waiver of that term.

- Intellectual Property: By entering the Challenge, each contestant warrants that (a) they are the author and/or authorized owner of the entry; (b) that the entry is wholly original with the contestant (or is an improved version of an existing solution that the contestant is legally authorized to enter in the Challenge); (c) that the submitted entry does not infringe any copyright, patent, or any other rights of any third party; and (d) that the contestant has the legal authority to assign and transfer to NIJ all necessary rights and interest (past, present, and future) under copyright and other intellectual property law, for all material included in the Challenge entry that may be held by the contestant and/or the legal holder of those rights. Each contestant agrees to hold the Released Parties harmless for any infringement of copyright, trademark, patent, and/or other real or intellectual property rights, which may be caused, directly or indirectly, in whole or in part, from contestant’s participation in the Challenge.

- Publicity: By entering the Challenge, each contestant consents, as applicable, to NIJ’s use of their name, likeness, photograph, voice, and/or opinions, and disclosure of their hometown and state for promotional purposes in any media, worldwide, without further payment or consideration.

- Privacy: Personal and contact information submitted through the challenge submission form (and associated DOJ/OJP platforms) is not collected for commercial or marketing purposes. Information submitted throughout the Challenge will be used only to communicate with contestants regarding entries and/or the Challenge.

- Compliance With Law: By entering the Challenge, each contestant guarantees that the entry complies with all federal and state laws and regulations.

VI. Prize Disbursement and Challenge Winners

VI.a. Prize Disbursement and Requirements

Prize winners must comport with all applicable laws and regulations regarding prize receipt and disbursement. For example, NIJ is not responsible for withholding any applicable taxes from the award.

VI.b. Specific Disqualification Rule

If the announced winner(s) of the Challenge prize is found to be ineligible or is disqualified for any reason listed under “Other Rules and Conditions: Eligibility,” NIJ may make the award to the next runner(s) up, as previously determined by the NIJ Director.

VI.c. Rights Retained by Contestants, Challenge Winners, and NIJ

- All legal rights in any materials or products submitted in entering the Challenge are retained by the contestant and/or the legal holder of those rights. Entry in the Challenge constitutes express authorization for NIJ staff to review and analyze any and all aspects of submitted entries.

- Upon acceptance of any Challenge prizes, the winning contestant(s) agrees to allow NIJ to post, on NIJ’s website, the proposed methodology. This will allow others to benefit.

- NIJ reserves a royalty-free, non-exclusive, and irrevocable license to reproduce, publish, or otherwise use, and authorize others to use (in whole or in part, including in connection with derivative works), for federal purposes all work and intellectual property submitted in any prize-winning Challenge entry.

VII. Contact Information

All substantive questions pertaining to the Challenge must be submitted by June 2 at 11:59 p.m. to [email protected], and all answers will be publicly posted on the Challenge website by June 16. Questions of a technical nature (e.g., challenges uploading submissions) may be submitted at any time to [email protected] and will be answered as soon as possible.

Prior to emailing, please first read through "III.d. How to Enter" and the detailed registration and submission instructions.

VIII. References

Biemer, P. P. (2010). Total Survey Error: Design, Implementation, and Evaluation. Public Opinion Quarterly, 74(5), 817-848. doi:10.1093/poq/nfq058

Biemer, P. P., de Leeuw, E. D., Eckman, S., Edwards, B., Kreuter, F., Lyberg, L. E., West, B. T. (2017). Total Survey Error in Practice : Improving Quality in the Era of Big Data. Somerset, United States: John Wiley & Sons, Incorporated.

Bureau of Justice Statistics. (2021). National Crime Victimization Survey (NCVS). Retrieved from https://bjs.ojp.gov/data-collection/ncvs.

Couper, M. P. (2017). New Developments in Survey Data Collection. Annual Review of Sociology, 43(1), 121-145. doi:10.1146/annurev-soc-060116-053613

Edwards, W. S., Giambo, W. P., & Kena, W. G. (2020). National Crime Victimization Survey Local-Area Crime Survey Kit. Retrieved from Washington, DC: https://bjs.ojp.gov/sites/g/files/xyckuh236/files/media/document/ncvslacsk.pdf

Groves, R. M., & Peytcheva, E. (2008). The Impact of Nonresponse Rates on Nonresponse Bias. Public Opinion Quarterly, 72(2), 167-189. Doi.org/10.1093/poq/nfn011

La Vigne, N., Dwivedi, A., Okeke, C., & Erondu, N. (2014). Community Voices: A Participatory Approach for Measuring Resident Perceptions of Police and Policing. Retrieved from Washington, DC: https://www.urban.org/sites/default/files/publication/102430/community-voices-a-participatory-approach-for-measuring-resident-perceptions-of-police-and-policing.pdf

Lynn, P. (2008). The Problem of Nonresponse In E. D. de Leeuw, J. Hox, & D. Dillman (Eds.), International Handbook of Survey Methodology (1st ed., pp. 35-55). New York: Taylor and Francis.

Rosenbaum, D., Lawrence, D., Hartnett, S., McDevitt, J., & Posick, C. (2015). Measuring procedural justice and legitimacy at the local level: the police–community interaction survey. Journal of Experimental Criminology, 1-32. doi:10.1007/s11292-015-9228-9